PLBART/sentencepiece/dict.txt at main · wasiahmad/PLBART · GitHub

Official code of our work, Unified Pre-training for Program Understanding and Generation [NAACL 2021]. - PLBART/sentencepiece/dict.txt at main · wasiahmad/PLBART

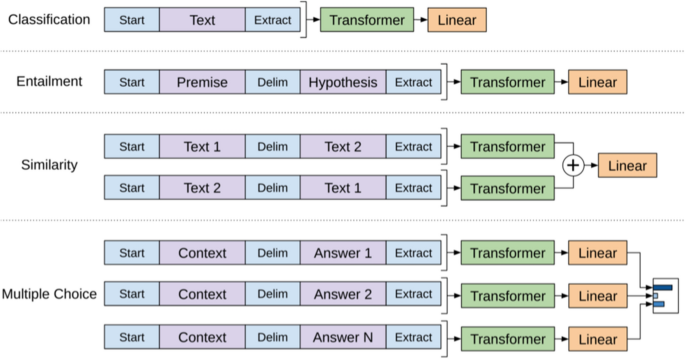

Bringing order into the realm of Transformer-based language models for artificial intelligence and law

Shugensai 2×8-size paper, jiaxuan (double-layer xuan paper), 3 tan

SMart JOINT_003 Gag, RED - Gags

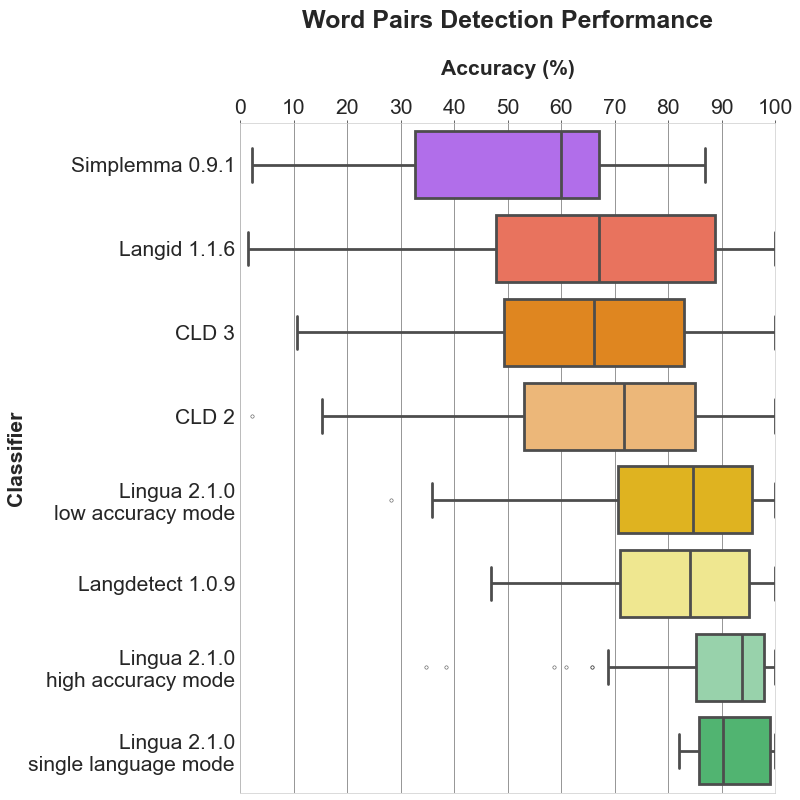

GitHub - pemistahl/lingua-py: The most accurate natural language detection library for Python, suitable for short text and mixed-language text

Wild Gent Willow-Leaf Single-Tail Whip - Whips & Candles

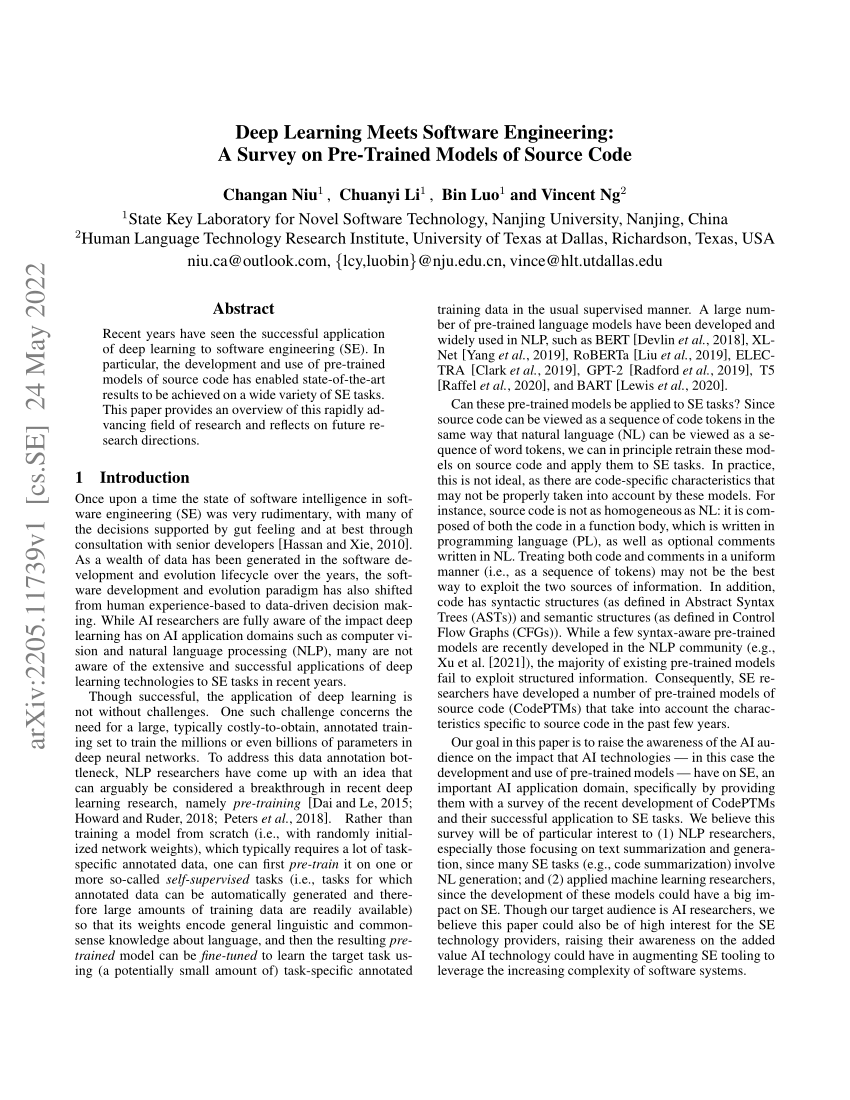

(PDF) Deep Learning Meets Software Engineering: A Survey on Pre-Trained Models of Source Code

using directMention() documentation doesn't seem to be correct in docs · Issue #1148 · slackapi/bolt-js · GitHub

deprecate `pub` command · Issue #2736 · dart-lang/pub · GitHub

マ㾭 - Tools

GitHub - leabhart/Maldocs: Scripting together some of my favorite Python tools for doing initial triage of a suspected malicious document (e.g. PDF, DOC, DOCX, XSLM, etc.)

[Overseas Genuine Product] ♡ False Nails / Nail Tips

[Question] SyntaxError: Parsing JSON at

BART for Pre-Training · Issue #6743 · huggingface/transformers · GitHub